In 2017, I made a live-action virtual reality (VR) series with a friend from my time at Pocket Gems, who was the creative visionary and conceived the idea. I was new to both VR and filmmaking, and this was a cool opportunity to learn a lot in a time-boxed project (8 months) with a concrete deliverable.

We made a 4-part series called ‘Playback’, which was fully funded by Oculus Studios (Facebook). Over 15k users downloaded and watched the series, and it was one of Oculus’s featured projects in the Oculus App Store. We were also selected for the Google Jump Start Program, which gave us access to an Odyssey 360 camera.

Thesis

Our thesis had a number of components. At its core, we wanted to find out whether we could drastically improve the quality-to-cost ratio of VR film production by introducing constraints. Ultimately, we were looking to create a platform and process for profitable VR content creation (‘Netflix for VR’).

- First Person: The series is shot primarily from the protagonist’s point of view, which lets the viewer experience the story through his eyes. We thought that stereo (each eye sees a different video) was important for realism, as it mimics our own eyes.

- Narrative Stories: Focus on storytelling and include some light interaction and choice to give the user agency and improve immersion. Black Mirror tried this approach with Bandersnatch (which was produced after this).

- Freemium: Start with episodic content with the first episode free, together with an upgrade path to unlock the rest of the experience (something we learned from our time working on Episode).

- Technology: Build software and proprietary production techniques that increase quality and reduce cost, even on low-end Android devices (which increases the size of the audience).

Pitch

This was our short pitch for the production, called Playback:

“Playback is a revolutionary VR miniseries that pushes the boundaries of narrative storytelling and viewer interaction. It’s a cautionary tale about life-changing technological advancement and the personal and societal implications of its adoption.

You experience life through Alex’s POV as his company is about to launch their new, groundbreaking product: a device that records moments and allows people to re-live those moments in all five senses.

As the story progresses, the boundary between Alex’s technology and his own reality begins to fall away, pressing the viewer to question what their own reality will mean as our world becomes increasingly virtual.”

Process

The entire process took around eight months and was broken up as follows:

Month 1: Pitch and Funding

- High-Level Pitch: We wrote up a high-level pitch and deck for the Oculus team, who then approved a $50k budget for Playback (over another, zombie-themed concept).

- Budget: We prepared a high-level budget to make sure that we could actually produce the experience with the $50k.

Months 2-3: Writing and Production Process

We split the creative part (writing and character development) from the production part (technology, team, production process) and worked on each in parallel:

- Overall Workflow: We needed to know how the overall process would work, from the point of filming to getting the series into a user’s hands. We went through this entire flow, including creating an app and deploying it to the app store.

- Vertical Slice: We picked a single scene and tried to make it look ‘production ready’ with a very short test clip. This set the quality bar for the production.

- Story arc and characters: The pitch needed to be broken down into a story, and the characters needed to be developed.

- Detailed Script: After the story, a detailed script with dialogue was prepared for 4 episodes, including choice/branching narrative.

- Core Team and Contractors: We needed to assemble a small team (which was mostly unpaid). The core team included our Directors of Photography (Sensorium), an NYU film student, and a visual designer who worked with us on nights and weekends.

- Technology experiments: We ran lots of experiments with both hardware (e.g. cameras, rigs, lighting) and software (e.g. Depthkit) to figure out some of the tools that we could use during production.

Month 4: Pre-Production

- Casting: We decided to hire SAG actors (under New Media), which meant we had access to better actors but had to follow certain guidelines. We reviewed 100+ audition tapes and narrowed them down to 3 finalists for each major role.

- Prepare for shooting: This required a lot of work – we needed to pick venues, rehearse with actors, design the sets, and create very detailed shot lists to make sure we got all the footage we needed.

- Shoot week: We planned to shoot the whole series in one week. This was a very structured and organized period starting early in the morning and ending late at night. Our “team” included over 30 people working at different points during the week and this was the most “expensive” part of the production process.

Months 5-8: Post Production

Post production took almost half the total time, and we underestimated how long this part of the process would actually take by a fair bit.

- Shot selection: We reviewed all the raw footage and picked the shots that we wanted to use for the series.

- Stitching: For stereoscopic VR, we needed to ‘stitch’ the video and image files together and make sure that the image for each eye lined up, so that the user did not appear to be seeing double (very off-putting). This was difficult and time-consuming.

- Engineering: We added components like a ‘cyber world’, user AR interaction, branching narrative, and a number of performance improvements which required focused engineering and design time.

- Film festivals: We chose a few festivals and created pitch decks and submission entries for them.

- Launch and review metrics: Once launched, we needed to analyze the metrics and user behaviour and compare them to our original hypotheses.

Budget

We had a strict budget, and part of our thesis was to see how high we could push the quality bar with a fixed, restricted budget (comparing ourselves to experiences with 20x+ bigger budgets). We raised $50k from Oculus (as a grant), and we ended up very close to our original budget.

We prepared a detailed budget with 10% flex built in, and tracked the costs vs estimates meticulously in a spreadsheet. Most creative projects go over budget, and having this kind of discipline allowed us to keep costs under control and scrutinize all expenses carefully.

Given that our thesis was to create high-quality content for a fraction of the price that was typical in the industry, it was important for us to control costs carefully.
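
The estimate-versus-actual tracking described above can be sketched in a few lines of Python. This is only an illustration, not the actual spreadsheet: it assumes the 10% flex is held back from the $50k total as a contingency reserve, and the line items, amounts, and the `summarize` helper are all made up for the example.

```python
from dataclasses import dataclass

TOTAL_BUDGET = 50_000  # grant amount mentioned in the post (whole dollars)
FLEX_PERCENT = 10      # assumption: flex is reserved out of the total


@dataclass
class LineItem:
    name: str
    estimate: int
    actual: int = 0

    @property
    def variance(self) -> int:
        """Positive means over the estimate, negative means under."""
        return self.actual - self.estimate


def summarize(items, total=TOTAL_BUDGET, flex_percent=FLEX_PERCENT):
    """Compare spend against the plan and report how much flex is left."""
    flex = total * flex_percent // 100
    allocatable = total - flex            # what the estimates may add up to
    planned = sum(item.estimate for item in items)
    spent = sum(item.actual for item in items)
    flex_used = max(spent - allocatable, 0)
    return {
        "planned": planned,
        "spent": spent,
        "flex_used": flex_used,
        "flex_remaining": flex - flex_used,
        "within_budget": spent <= total,
        "over_estimate": [item.name for item in items if item.variance > 0],
    }


# Hypothetical line items, NOT the real Playback numbers.
items = [
    LineItem("cast", estimate=12_000, actual=13_000),
    LineItem("equipment", estimate=8_000, actual=7_500),
    LineItem("locations", estimate=5_000, actual=5_000),
    LineItem("post production", estimate=20_000, actual=21_500),
]
report = summarize(items)
print(report)
```

Listing the items that ran over their estimate is the useful part in practice: it shows where the flex is going while there is still time to cut scope elsewhere.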

Technology

We tested out a number of different technologies in the production:

- Stereo 4K footage: Each eye sees a different video (mimicking our own eyes) at 4K resolution, which makes it feel more real to the user. This is hard to achieve on low-end devices.

- Proprietary image + video cut out system: In order to get the quality of video that we wanted, we built a system to constrain the ‘action’ to a small part of the scene and overlaid video on top of a still image. This required a very precise shooting and post production process that we created ourselves.

- Photogrammetry: We mapped the inside of one of our scenes (3D + textures) to allow a seamless transition between live action scenes and a computer generated world.

- Volumetric video: We used Depthkit to capture volumetric video (3d), for a scene with a ‘ghost’ in the virtual world.

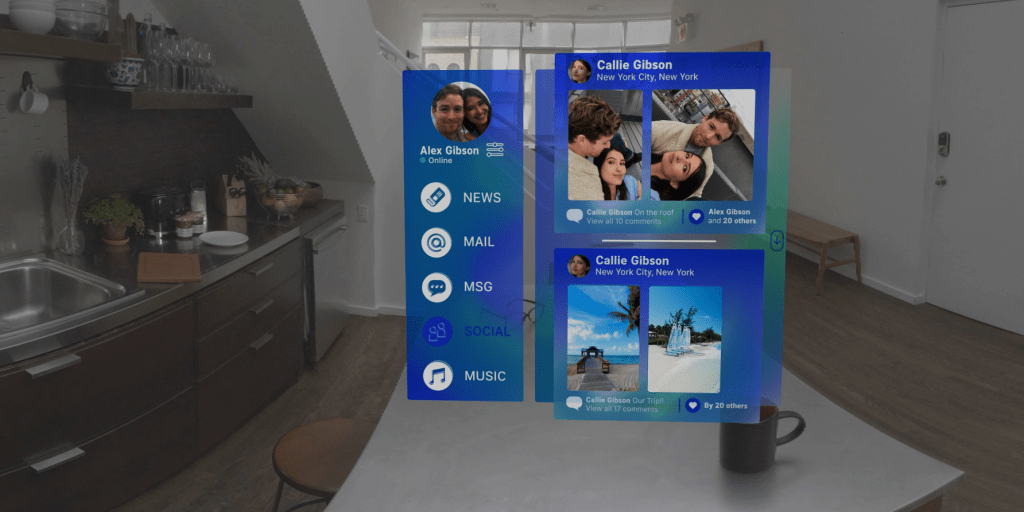

- AR Interaction: At the start of the first episode, we created a HUD which the viewer could interact with (reading emails, the news, etc.), which added to the sci-fi feel and the first-person interaction.

- Branching Narrative: We created choices where users could send different texts (via their AR HUD), which changed the outcome of the story.
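
One way to picture the branching narrative is as a small graph of scenes, where each text the viewer sends selects the next scene. The sketch below is illustrative only: the scene ids, clip names, message texts, and the `play` helper are hypothetical and do not reflect how Playback was actually built in Unity.

```python
from dataclasses import dataclass, field


@dataclass
class Scene:
    clip: str                                    # video to play (hypothetical file name)
    choices: dict = field(default_factory=dict)  # text message -> next scene id


# Hypothetical story graph with a single branch point.
STORY = {
    "intro": Scene("ep1_intro.mp4", {"I'm in": "launch", "Not now": "doubt"}),
    "launch": Scene("ep1_launch.mp4"),
    "doubt": Scene("ep1_doubt.mp4"),
}


def play(story, start, picks):
    """Walk the graph, using one pick per scene that offers choices.

    Returns the clips in the order they would play. Stops at the first
    scene with no choices, and raises ValueError on an unknown pick.
    """
    clips = []
    scene_id = start
    remaining = iter(picks)
    while True:
        scene = story[scene_id]
        clips.append(scene.clip)
        if not scene.choices:
            return clips
        pick = next(remaining, None)
        if pick not in scene.choices:
            raise ValueError(f"no branch for {pick!r} in scene {scene_id!r}")
        scene_id = scene.choices[pick]


print(play(STORY, "intro", ["Not now"]))
```

Keeping the story as plain data like this means the writers can change branches without touching the playback logic, at the cost of every extra branch needing its own footage.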

Festivals

We created something that was innovative both in the way it approached storytelling and in the technologies that we implemented for VR. We decided to apply to a few film festivals (Sundance, Tribeca) in their VR experiences segments. We got fairly far with Sundance but were ultimately not selected.

I went to Sundance in 2018 anyway, and had a great time skiing and watching movies. Our Directors of Photography (Sensorium) had another VR experience which was featured, so it was fun to see them and experience some of their other work.

Outcome

Here is a short video showcasing the first episode (although it’s much better experienced in VR).

We released the final product in the Oculus App Store. We were featured and had reasonable download and view numbers (over 15k people). Users got the first episode for free, and had to pay to unlock the next three episodes. There were some bugs in the upgrade process that negatively impacted our ratings, but many of the people who were able to upgrade seemed delighted.

We were still a step function away, in both addressable audience and conversion-to-payer rates, from justifying our original hypothesis and investing further. Neither of us is still working in VR.

Learnings

- Scope creep: This was a classic mistake that we should have realized earlier (as we’ve done this many times in games) but we got too excited about some of the tools and technologies and probably added too much (e.g. volumetric video capture, branching narrative) that was not necessary to test our original hypothesis.

- Stereoscopic vs. Monoscopic: I think we should have killed the stereoscopic requirement early in post production. Stitching of video so that it does not look warped or incorrect for both eyes is a real pain, and this would have saved us a few months as well as allowed us to ship a more polished experience overall.

- Developer experience: We built most of the application in Unity and also used tools like Blender and Adobe Premiere Pro. The workflow was pretty cumbersome, and creating scenes and testing them in VR was fairly manual. We could have built a lot of automation ourselves, but it was not worth it for just one series.

- VR User Experience: At the time, we were optimizing for users on phone-based Oculus headsets such as the Gear VR (Android phones strapped to your face), and this entire UX was terrible (buggy, battery hog, performance and storage issues, etc.). Standalone devices have improved the experience substantially, but we’re still not at the stage where this will be mainstream.

- Running out of energy: Towards the end, it became a real grind to get it out the door. Everyone was tired, and the project took 30% longer than any of us expected.

Overall, it was a great learning experience, but it made me realize I don’t want to work in the ‘content creation’ business, especially in entertainment. I much prefer working on tools for entrepreneurs and businesses, and hope to spend more of my career building technology for this audience.
